How Does REST API Integration for Automated Scraped Data Delivery Reduce Manual Processing Time?

May 12

Introduction

Organizations relying on high-volume web data often struggle with the delays created by manual file transfers, spreadsheet handling, and repetitive validation tasks. When data is collected from multiple digital sources, processing and distributing it manually increases operational complexity and slows strategic decision-making. Teams often spend hours formatting outputs, correcting errors, and sending updates across systems before insights become usable.

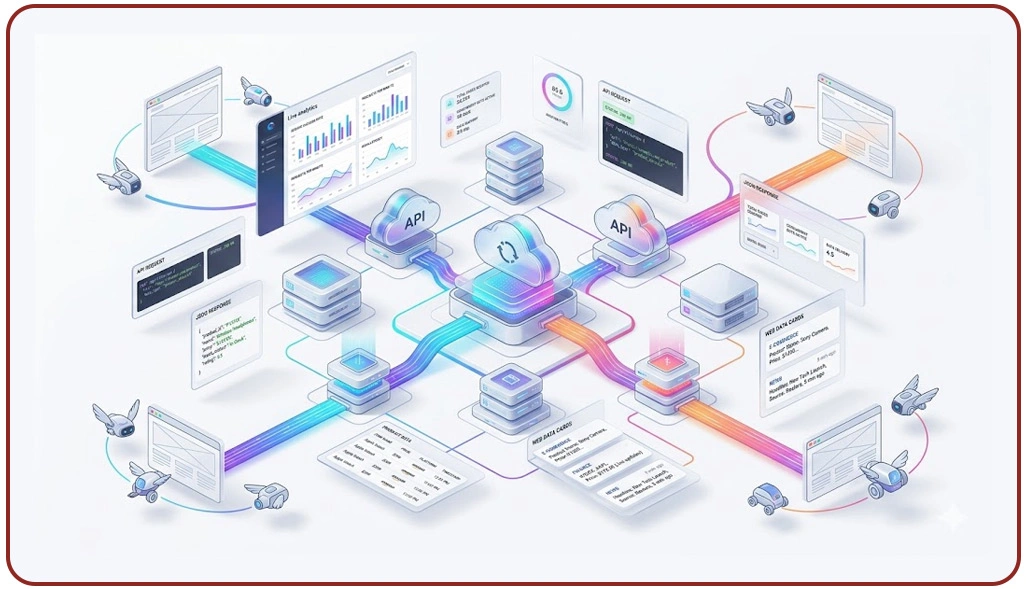

One effective approach is REST API Integration for Automated Scraped Data Delivery, which creates a direct bridge between extraction systems and business applications. By moving data through secure endpoints, organizations eliminate repetitive downloads and manual exports while maintaining consistent formatting. This transformation allows teams to focus on analysis instead of repetitive operational tasks.

A modern Scraping API simplifies the movement of structured data between crawler systems, dashboards, and client platforms. Businesses handling retail, finance, travel, and media intelligence increasingly depend on automation to ensure fresh data reaches stakeholders without delays. As operations scale, integrating APIs into scraping workflows creates a dependable structure for faster access, reduced errors, and measurable productivity improvements.
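To make the idea of a direct bridge concrete, here is a minimal sketch of how an extraction system might package scraped records and push them to a client endpoint over REST. The URL, token, and payload shape are hypothetical placeholders, not details of any specific platform, and a production setup would likely add retries and error handling.

```python
import json
import urllib.request

# Hypothetical values for illustration only; not taken from any real service.
DELIVERY_URL = "https://client.example.com/api/v1/scraped-records"
API_TOKEN = "replace-with-a-real-token"


def build_delivery_request(records, url=DELIVERY_URL, token=API_TOKEN):
    """Package scraped records as a JSON POST request for a client endpoint."""
    body = json.dumps({"count": len(records), "records": records}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
    )


def deliver(records, timeout=10):
    """Send the request and return the HTTP status code of the response."""
    request = build_delivery_request(records)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

Calling `deliver(records)` right after a crawl finishes would replace the export, download, and email steps with a single HTTP call, which is where the time savings described above come from.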

Eliminating Delays Through Connected Data Movement Systems

Organizations collecting web data from multiple digital sources often struggle when raw outputs must be manually organized before reaching decision-makers. Teams spend time downloading files, validating records, cleaning duplicates, and distributing spreadsheets to clients or internal platforms. These repetitive steps slow reporting and create inconsistencies between teams. This is where API-based workflows become essential for reducing processing cycles.

A major challenge in large-scale extraction projects is keeping data delivery synchronized between analysts, clients, and reporting systems. Manual transfer often introduces delays of several hours, especially when datasets come from multiple locations. Organizations that deliver web scraping data to clients using REST APIs improve workflow continuity because information reaches stakeholders through direct endpoint access.

Businesses using Live Crawler Services also improve reporting reliability by ensuring data is delivered immediately after collection. Real-time transfers reduce waiting periods and make datasets accessible across platforms without repeated exports. In many industries, this leads to 45% faster reporting cycles and significantly lower manual workload.

| Operational Barrier | Automated Resolution |
|---|---|
| File transfer delays | Direct endpoint routing |
| Duplicate handling | System validation |
| Reporting bottlenecks | Instant synchronization |
| Team dependency | Shared access |

Organizations also prefer web scraping data pipelines with REST API integration because they standardize delivery while reducing processing errors. Studies indicate automation reduces manual workload by nearly 18 hours per analyst weekly. This creates measurable efficiency improvements and supports faster execution across data-driven teams.
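The validation and duplicate handling discussed in this section can be automated before data ever reaches an endpoint. The sketch below assumes each record is a dictionary with hypothetical `source`, `sku`, and `price` fields; real pipelines would use their own schema and rules.

```python
def validate_and_dedupe(
    records,
    key_fields=("source", "sku"),
    required=("source", "sku", "price"),
):
    """Reject records missing required fields, then keep the first record per key.

    The field names are illustrative assumptions, not a fixed schema.
    """
    seen = set()
    clean, rejected = [], []
    for record in records:
        # A required field counts as missing when it is absent, None, or empty.
        if any(record.get(field) in (None, "") for field in required):
            rejected.append(record)
            continue
        key = tuple(record[field] for field in key_fields)
        if key in seen:
            continue  # duplicate of a record that was already accepted
        seen.add(key)
        clean.append(record)
    return clean, rejected
```

Returning the rejected records separately, rather than silently dropping them, gives analysts a short exception list to review instead of a full spreadsheet to clean by hand.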

Accelerating Decisions Through Unified Distribution Channels

Data projects often fail to deliver immediate value when reporting outputs are distributed inconsistently across departments. One team may work from updated datasets while another references outdated files, leading to reporting errors and delayed decisions. Organizations adopting Scalable Data Scraping and API Distribution Solutions improve consistency by connecting extraction systems directly with analytics tools.

Companies increasingly use API Architecture for Real-Time Web Scraping Systems because it creates immediate communication between extraction tools and business applications. Instead of waiting for manual uploads, decision-makers access validated outputs immediately. This enables faster reaction times and reduces reporting lag. Research shows companies integrating automated delivery reduce turnaround time by more than 50% in intelligence operations.
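One common way to give consumers immediate access without resending everything is cursor-based incremental delivery: each client remembers the timestamp of the last record it received and asks only for newer ones. The following is a simplified in-memory sketch; the `collected_at` field and the function name are assumptions made for illustration.

```python
from datetime import datetime, timezone


def updates_since(records, cursor):
    """Return records collected after `cursor`, oldest first, and the next cursor.

    `records` is a list of dicts with a timezone-aware `collected_at` datetime
    (a hypothetical field name). When nothing is new, the cursor is unchanged.
    """
    fresh = [r for r in records if r["collected_at"] > cursor]
    fresh.sort(key=lambda r: r["collected_at"])
    next_cursor = fresh[-1]["collected_at"] if fresh else cursor
    return fresh, next_cursor


# Example: a client that last synced at 09:00 UTC asks for newer records.
last_sync = datetime(2024, 1, 1, 9, tzinfo=timezone.utc)
```

An endpoint built on this pattern would accept the cursor as a query parameter and return the new cursor in its response, so every consumer reads from the same source at its own pace.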

Market research teams depend heavily on timely data for Competitive Benchmarking. When extracted information is delayed, pricing analysis, trend comparisons, and market positioning become inaccurate. API-based routing eliminates these gaps and ensures continuous updates are available for strategic comparisons.

| Business Requirement | API Advantage |
|---|---|
| Shared reporting | Unified datasets |
| Faster decisions | Live access |
| Version control | Consistent source |
| Reduced rework | Standard delivery |

In addition, Secure REST API Delivery for Extracted Web Data ensures protected transmission, reducing risks associated with unauthorized access. Audit-ready logs also improve transparency. Organizations report 35% fewer reporting errors after replacing manual delivery methods with API-based systems, improving collaboration between operational, analytics, and client-facing teams.
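The article does not specify a security mechanism, so the sketch below shows one widely used option as an assumption: signing each payload with an HMAC over a shared secret so the receiver can detect tampering, and recording an audit entry for every delivery attempt. The function names and audit fields are hypothetical, and this would normally sit alongside HTTPS and token-based authentication rather than replace them.

```python
import hashlib
import hmac
import json


def sign_payload(body: bytes, secret: bytes) -> str:
    """Compute a hex HMAC-SHA256 signature for the payload bytes."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_payload(body: bytes, secret: bytes, signature: str) -> bool:
    """Check a received signature using a constant-time comparison."""
    expected = sign_payload(body, secret)
    return hmac.compare_digest(expected, signature)


def audit_entry(client_id: str, body: bytes, accepted: bool) -> dict:
    """Build a log record describing one delivery attempt (illustrative fields)."""
    return {
        "client_id": client_id,
        "sha256": hashlib.sha256(body).hexdigest(),
        "bytes": len(body),
        "accepted": accepted,
    }


# Usage: the sender signs the body, the receiver verifies before accepting it.
secret = b"shared-secret-for-illustration"
body = json.dumps({"records": [{"sku": "A1"}]}).encode("utf-8")
signature = sign_payload(body, secret)
entry = audit_entry("client-42", body, verify_payload(body, secret, signature))
```

Storing a payload hash in each audit entry lets a later review confirm exactly which dataset a client received, without keeping a second copy of the data in the log.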

Scaling Operational Workflows Across Enterprise Data Networks

As organizations expand their web intelligence operations, manual data handling becomes increasingly difficult to manage. Large enterprises often collect millions of records daily from multiple sources, creating a substantial workload for analysts responsible for preparing reports. Many enterprises also rely on Secure REST API Delivery for Extracted Web Data to maintain compliance while sharing sensitive outputs across distributed environments.

Businesses implementing Enterprise Web Crawling often require continuous delivery across internal dashboards, cloud platforms, and client applications. Automated endpoints support this scale by distributing outputs immediately after extraction. This removes manual dependencies and allows teams to manage higher data volumes without increasing staff.
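Distributing large outputs to several systems at once is usually done in batches so that no single request carries millions of records. Here is a minimal sketch, assuming each destination is represented by a callable that accepts a list of records; the destination names and the default batch size are illustrative.

```python
def batches(records, size):
    """Yield consecutive slices of `records` containing at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(records), size):
        yield records[start:start + size]


def fan_out(records, destinations, batch_size=500):
    """Send every batch to every destination; return batches sent per destination.

    `destinations` maps a name (for example "dashboard") to a callable that
    accepts one list of records. Both are assumptions made for this sketch.
    """
    sent = {name: 0 for name in destinations}
    for batch in batches(records, batch_size):
        for name, send in destinations.items():
            send(batch)
            sent[name] += 1
    return sent
```

In practice each callable would wrap an HTTP call to a dashboard, cloud bucket, or client application, and adding a new consumer would mean registering one more entry in `destinations` rather than adding another manual export.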

The need for reliable automation has increased significantly in industries like retail, travel, and financial monitoring. Organizations use Scalable Data Scraping and API Distribution Solutions to manage expanding workloads while ensuring consistency across reporting systems. This improves speed and reduces operational overhead.

| Enterprise Need | Automated Outcome |
|---|---|
| High data volume | Direct routing |
| Global stakeholders | Shared endpoints |
| Frequent updates | Scheduled sync |
| Secure transfer | Controlled access |

At scale, businesses choose Web Scraping Data Pipelines With REST API Integration to support predictable workflows and stable reporting. Industry benchmarks show enterprise automation reduces operational processing costs by 40% while improving delivery reliability.

How Can Web Data Crawler Help You?

Modern organizations need more than extraction; they need seamless delivery to internal systems and client platforms. Through REST API Integration for Automated Scraped Data Delivery, we support faster movement of verified data into analytics environments, reducing delays caused by manual handling and disconnected reporting systems.

Our service approach includes:

- Building custom automated extraction workflows

- Structuring data into client-ready formats

- Connecting endpoints for instant transfer

- Supporting scheduled and live delivery

- Improving reliability across projects

- Reducing operational workload

These services help organizations scale reporting operations without adding internal complexity. Businesses seeking faster operational workflows often choose Secure REST API Delivery for Extracted Web Data to ensure every transfer remains protected, accurate, and continuously accessible.

Conclusion

Businesses reducing manual workflows often rely on REST API Integration for Automated Scraped Data Delivery to streamline reporting, improve synchronization, and minimize delays between extraction and actionable insights. This creates a faster path from raw data to operational decisions.

Organizations scaling data projects benefit from Scalable Data Scraping and API Distribution Solutions to manage growing demand while keeping reporting consistent across teams. Connect with Web Data Crawler today to automate delivery, reduce manual effort, and build a stronger data workflow.