How Does Bypass Anti-Bot Protection Using Advanced Web Scraping Improve Data Extraction by 97%?

April 30

Introduction

In today's data-driven ecosystem, extracting reliable insights from modern applications has become increasingly complex due to advanced anti-bot mechanisms. Businesses relying on Mobile App Scraping and web data extraction often encounter barriers such as CAPTCHAs, rate limits, and behavioral tracking systems that restrict automated access.

To overcome these obstacles, organizations are now focusing on Bypass Anti-Bot Protection Using Advanced Web Scraping techniques that combine intelligent automation with adaptive scraping strategies. These approaches enable seamless interaction with dynamic web environments while maintaining high success rates and minimal detection risk. From rotating IP infrastructures to human-like browsing patterns, modern scraping frameworks are evolving to handle even the most sophisticated defenses.

The ability to extract data accurately and consistently can improve operational decisions by up to 97%, especially when dealing with large-scale dynamic applications. Businesses that invest in advanced scraping techniques not only improve data availability but also ensure real-time insights, enabling faster responses to market changes. This blog explores key challenges and solutions that drive efficient data extraction despite stringent anti-bot protections.

Addressing Complex Barriers in Modern Data Extraction Systems

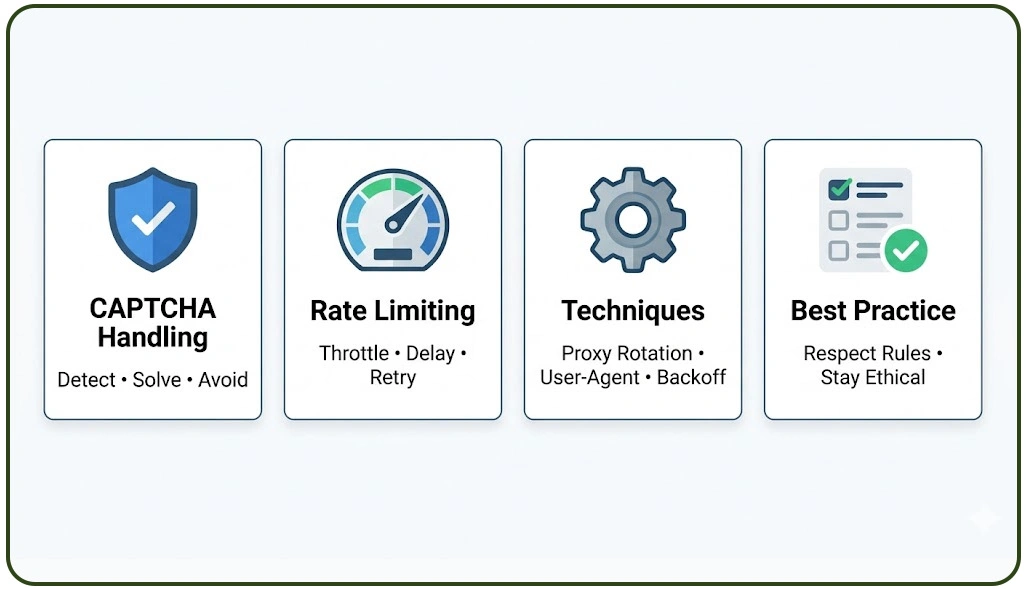

Modern web platforms deploy multi-layered defenses that significantly disrupt automated data collection processes. These systems rely on behavioral analytics, fingerprinting, and request validation to restrict non-human interactions. One of the most persistent challenges is the ability to Handle CAPTCHA and Rate Limits in Web Scraping, which directly impacts extraction continuity and efficiency.

To mitigate these issues, developers increasingly depend on a Web Scraping API that manages session handling, distributes traffic intelligently, and integrates CAPTCHA-solving mechanisms. Additionally, adaptive throttling techniques ensure that request frequency aligns with server tolerance levels, preventing sudden blocks.

Challenges and Practical Solutions:

| Challenge | Operational Impact | Recommended Approach |

|---|---|---|

| CAPTCHA interruptions | Workflow disruptions | Automated solving integration |

| Rate limiting | Reduced data throughput | Smart request scheduling |

| Script-heavy pages | Data inaccessibility | Headless browser execution |

| Bot behavior detection | IP blocking | Human-like interaction patterns |

Studies indicate that over 60% of scraping failures originate from unmanaged request patterns and CAPTCHA triggers. By implementing structured request flows and dynamic session handling, businesses can maintain higher success rates. Furthermore, monitoring tools help detect anomalies in real time and adjust strategies accordingly.

These advanced practices ensure that data extraction pipelines remain stable, scalable, and capable of adapting to evolving platform defenses, ultimately improving the reliability of collected datasets.

Scaling Intelligent Data Collection Across Protected Platforms

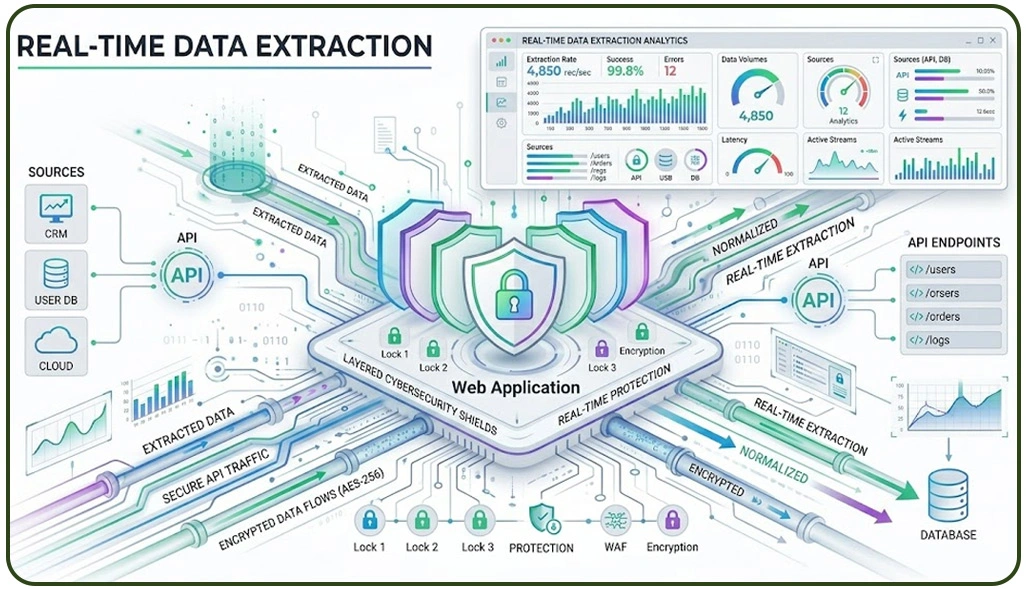

As digital platforms evolve, data extraction requires more than static scripts. The need for Real-Time Data Extraction From Protected Web Applications has become essential for businesses that rely on up-to-date insights. Static scraping methods often fail when dealing with dynamic rendering, asynchronous loading, and continuously changing data structures.

This is where AI Web Scraping Services play a critical role. These systems leverage machine learning algorithms to analyze target platforms, predict blocking patterns, and dynamically adjust scraping behavior. Instead of relying on predefined rules, AI-driven solutions learn from each interaction, improving accuracy and reducing failure rates over time.

Performance Comparison Overview:

| Metric | Conventional Methods | AI-Driven Approach |

|---|---|---|

| Data accuracy | Moderate | High |

| Blocking frequency | Frequent | Minimal |

| Adaptability | Low | Advanced |

| Processing speed | Slower | Faster |

AI-powered systems replicate human browsing behaviors such as scrolling, clicking, and timed interactions. This reduces detection risks while enabling smoother navigation across complex interfaces. Additionally, these systems can identify structural changes in websites and automatically adapt extraction logic without manual intervention.

Organizations leveraging intelligent scraping solutions report significant improvements in efficiency and data quality. This scalability allows businesses to expand their data operations without compromising reliability or performance.

Building Adaptive Infrastructure for Reliable Data Extraction

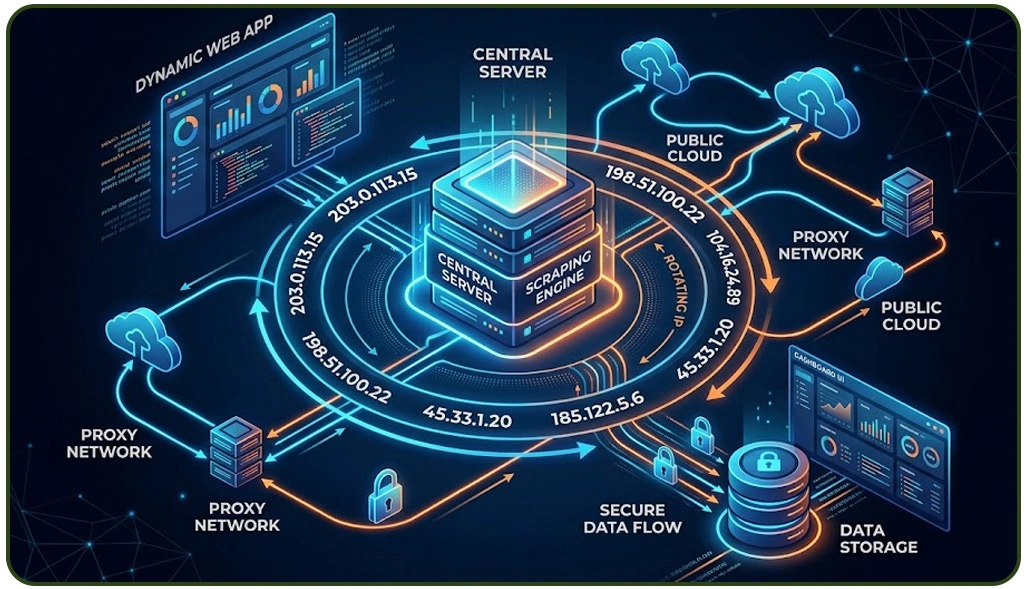

Creating a resilient data extraction framework requires a combination of intelligent design and adaptive technologies. A robust Web Crawler forms the backbone of such systems, enabling structured navigation across large-scale platforms. However, without proper infrastructure, crawlers alone cannot sustain long-term data collection in restricted environments.

One essential component is Rotating IP Scraping Solutions for Dynamic App, which distributes requests across multiple IP addresses to avoid detection. This approach minimizes the risk of bans and ensures continuous access to target platforms. By rotating IPs across different regions, businesses can also gather geo-specific insights with higher accuracy.

Infrastructure Capabilities and Benefits:

| Feature | Functional Advantage |

|---|---|

| IP rotation | Reduces detection risks |

| Proxy networks | Enhances anonymity |

| Load distribution | Improves system performance |

| Regional targeting | Enables localized data access |

Another critical capability is the ability to Extract Data From Dynamic Apps With Strong Bot Detection Systems using advanced browser automation tools. These tools replicate real user environments, allowing interaction with complex scripts and dynamic elements seamlessly.

By combining scalable architecture with intelligent automation, organizations can maintain reliable data pipelines, reduce operational risks, and achieve long-term sustainability in their data extraction efforts.

How Web Data Crawler Can Help You?

Achieving high success rates in complex environments requires a strategic approach where Bypass Anti-Bot Protection Using Advanced Web Scraping plays a central role in ensuring seamless data access and operational efficiency. We offer tailored solutions designed to handle the most advanced anti-bot mechanisms across industries.

Our Capabilities Include:

- Advanced automation frameworks for scalable extraction.

- Smart request management for consistent performance.

- Intelligent proxy handling for uninterrupted access.

- Real-time monitoring and error detection systems.

- Custom scraping workflows tailored to business needs.

- Secure and compliant data extraction practices.

In addition, our solutions are designed to support businesses looking to implement Bot Detection Avoidance Scraping, ensuring minimal disruptions and maximum efficiency even in highly protected environments.

Conclusion

Modern data extraction demands more than traditional scraping techniques, as platforms continue to evolve with sophisticated defense mechanisms. Implementing Bypass Anti-Bot Protection Using Advanced Web Scraping ensures consistent access to high-quality data while improving overall extraction efficiency and scalability.

At the same time, adopting strategies to Handle CAPTCHA and Rate Limits in Web Scraping allows businesses to maintain uninterrupted workflows and achieve higher success rates. Connect with Web Data Crawler today and take your data extraction capabilities to the next level.