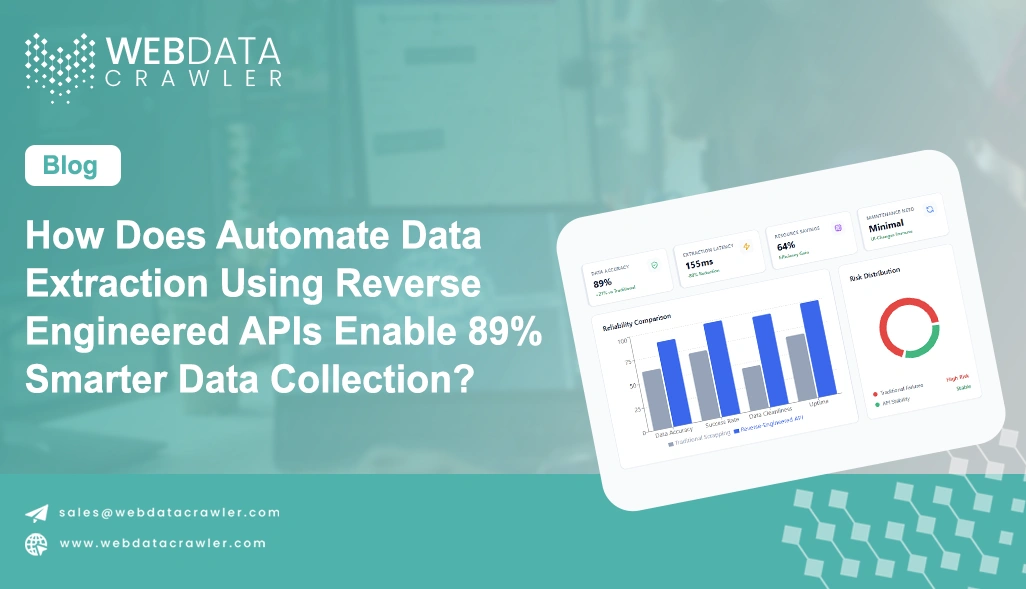

How Does Automating Data Extraction with Reverse-Engineered APIs Enable 89% Smarter Data Collection?

April 29

Introduction

Modern businesses rely heavily on accurate, real-time data to make strategic decisions, yet traditional scraping methods often fall short when dealing with dynamic mobile applications. This is where mobile app scraping moves beyond conventional methods, offering deeper insights through backend access rather than surface-level extraction.

To overcome these limitations, organizations are shifting toward automating data extraction with reverse-engineered APIs as a smarter and more efficient solution. By analyzing how mobile applications communicate with their servers, businesses can tap into structured data streams that are faster, cleaner, and more reliable. This approach removes the dependence on unstable UI elements and reduces the risk of scraping disruptions caused by layout changes.

In today's competitive landscape, companies that adopt reverse-engineered API strategies can improve data accuracy by up to 89%, significantly enhancing decision-making capabilities. This blog explores how this advanced method solves real-world data challenges and empowers organizations to build robust, future-ready data pipelines.

Strengthening Backend Access to Overcome Data Extraction Challenges

Organizations often struggle with inconsistent and unreliable data when relying solely on frontend scraping techniques. Dynamic interfaces, frequent UI updates, and anti-bot mechanisms make it difficult to maintain stable data pipelines. To address this, businesses are increasingly focusing on backend-level extraction methods that provide direct access to structured datasets without depending on visual rendering.

A key advantage of this approach is the ability to discover hidden API endpoints, which gives access to valuable data sources that are not exposed through standard interfaces. Mobile app traffic analysis supports this process: by monitoring the network calls an app makes, teams can identify the endpoints that matter and retrieve data from them consistently.
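To make the traffic-analysis step concrete, here is a minimal sketch. It assumes the app's network calls were captured through an intercepting proxy and exported as a HAR file, the JSON archive format that most proxies and browser dev tools can write. The function name `find_json_endpoints` and the sample hosts are illustrative assumptions, not part of any specific tool.

```python
from collections import Counter
from urllib.parse import urlsplit


def find_json_endpoints(har: dict) -> list[tuple[str, str, int]]:
    """Return (method, host + path, hit count) for each JSON response in a HAR capture."""
    hits = Counter()
    for entry in har.get("log", {}).get("entries", []):
        request = entry.get("request", {})
        content = entry.get("response", {}).get("content", {})
        if "json" not in content.get("mimeType", "").lower():
            continue  # skip images, HTML, fonts and other non-API traffic
        parts = urlsplit(request.get("url", ""))
        # Drop the query string so repeated calls collapse into one endpoint.
        hits[(request.get("method", "GET"), parts.netloc + parts.path)] += 1
    return [(method, endpoint, count) for (method, endpoint), count in hits.most_common()]


def _entry(method: str, url: str, mime_type: str) -> dict:
    """Build a HAR entry trimmed to the fields the function reads (illustrative data)."""
    return {
        "request": {"method": method, "url": url},
        "response": {"content": {"mimeType": mime_type}},
    }


# Hypothetical capture; a real one would be loaded with json.load() from the exported file.
sample_har = {
    "log": {
        "entries": [
            _entry("GET", "https://api.example.com/v1/products?page=1", "application/json; charset=utf-8"),
            _entry("GET", "https://api.example.com/v1/prices?sku=9", "application/json"),
            _entry("GET", "https://api.example.com/v1/products?page=2", "application/json; charset=utf-8"),
            _entry("GET", "https://cdn.example.com/logo.png", "image/png"),
        ]
    }
}

endpoints = find_json_endpoints(sample_har)
for method, endpoint, count in endpoints:
    print(f"{count:>3}  {method}  {endpoint}")
```

Ranking by hit count is a simple heuristic: endpoints the app calls repeatedly are usually the ones that carry listing or pricing data, so they are a reasonable place to start.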

Additionally, a robust web crawler can automate the discovery and execution of these backend requests, keeping extraction scalable and efficient across large datasets. Because requests target the API rather than fragile UI selectors, the pipeline stays stable over the long term and needs less maintenance.
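As a rough illustration of automating those backend requests, the sketch below walks a cursor-paginated endpoint until it runs out of pages. The response shape (`items` plus `next_cursor`) is an assumption for the example; real APIs vary, and the `fetch_page` callable stands in for whatever HTTP client is actually used.

```python
from typing import Callable, Iterator, Optional


def crawl_all_pages(
    fetch_page: Callable[[Optional[str]], dict],
    max_pages: int = 100,
) -> Iterator[dict]:
    """Yield every item from a cursor-paginated endpoint.

    Assumes each page looks like {"items": [...], "next_cursor": "..." or None}.
    max_pages is a safety cap so a misbehaving API cannot loop forever.
    """
    cursor: Optional[str] = None
    for _ in range(max_pages):
        page = fetch_page(cursor)
        yield from page.get("items", [])
        cursor = page.get("next_cursor")
        if not cursor:
            return  # no further pages


# Hypothetical in-memory pages standing in for real HTTP responses.
fake_pages = {
    None: {"items": [{"id": 1}, {"id": 2}], "next_cursor": "p2"},
    "p2": {"items": [{"id": 3}], "next_cursor": None},
}


def fake_fetch(cursor: Optional[str]) -> dict:
    return fake_pages[cursor]


items = list(crawl_all_pages(fake_fetch))
print([item["id"] for item in items])
```

Passing the fetch function in as a parameter keeps the crawl logic testable without network access and makes it easy to swap clients later.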

| Method | Data Accuracy | Maintenance Effort | Speed | Scalability |
|---|---|---|---|---|
| Frontend Scraping | Medium | High | Slow | Limited |
| Backend API Extraction | High | Low | Fast | High |

By adopting backend-focused strategies, organizations can significantly enhance data quality, reduce disruptions, and build a more resilient extraction framework that supports long-term growth.

Improving Data Processing Efficiency Through Structured Pipelines

Data processing inefficiencies often arise from the unstructured outputs that traditional scraping methods produce. One of the most impactful capabilities in this area is extracting real-time data from mobile apps through their APIs, so that businesses always work with the most up-to-date information.

This is particularly valuable in industries where timing plays a critical role, such as e-commerce pricing, travel bookings, and financial analytics. Another important aspect is the ability to build data pipelines on top of reverse-engineered APIs, which lets organizations create automated workflows for continuous data collection and processing.

These pipelines reduce manual intervention and errors, and they integrate cleanly with analytics systems. A scalable web scraping API strengthens this ecosystem further by enabling secure and efficient data extraction across multiple platforms.
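A pipeline stage of this kind can be sketched in a few lines. The example below assumes a hypothetical pricing payload (`id`, `price.amount`, `price.currency`); it flattens each item into a typed record, counts the items it has to reject, and writes CSV that an analytics tool could load. The field names and the `PriceRecord` type are illustrative only.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class PriceRecord:
    product_id: str
    price: float
    currency: str
    collected_at: str


def normalize(raw: dict, collected_at: str) -> PriceRecord:
    """Map one raw API item to a flat record; raises on missing or malformed fields."""
    return PriceRecord(
        product_id=str(raw["id"]),
        price=float(raw["price"]["amount"]),
        currency=str(raw["price"]["currency"]).upper(),
        collected_at=collected_at,
    )


def run_pipeline(raw_items: list, collected_at: str) -> tuple[list[PriceRecord], int]:
    """Normalize every item, keeping a count of rejects instead of failing the batch."""
    records, rejected = [], 0
    for raw in raw_items:
        try:
            records.append(normalize(raw, collected_at))
        except (KeyError, TypeError, ValueError):
            rejected += 1
    return records, rejected


def to_csv(records: list[PriceRecord]) -> str:
    """Serialize records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=[field.name for field in fields(PriceRecord)],
        lineterminator="\n",
    )
    writer.writeheader()
    writer.writerows(asdict(record) for record in records)
    return buffer.getvalue()


# Hypothetical raw items: one valid, one with a bad price, one missing the price.
raw_items = [
    {"id": 101, "price": {"amount": "19.90", "currency": "eur"}},
    {"id": 102, "price": {"amount": "n/a", "currency": "eur"}},
    {"id": 103},
]

records, rejected = run_pipeline(raw_items, collected_at="2024-01-01T00:00:00Z")
csv_text = to_csv(records)
print(csv_text, f"rejected: {rejected}")
```

Counting rejects rather than silently dropping them gives the pipeline a simple quality signal to monitor over time.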

| Process Stage | Traditional Approach | Structured Extraction |
|---|---|---|
| Data Collection | Manual/Delayed | Automated/Instant |
| Data Structuring | Required | Pre-Formatted |
| Processing Time | High | Low |
| Error Rate | Moderate | Minimal |

With structured pipelines in place, organizations can transform raw data into actionable insights more efficiently, improving overall productivity and decision-making capabilities.

Scaling Data Operations with Intelligent Automation Techniques

As organizations expand their data operations, scalability becomes a critical factor in maintaining efficiency and performance. Traditional scraping methods often struggle to handle large volumes of data, leading to bottlenecks, incomplete datasets, and increased downtime. Advanced automation techniques address these challenges by enabling high-volume data extraction with minimal manual intervention.
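One common building block for high-volume extraction is retrying transient failures instead of dropping records. The sketch below shows exponential backoff with jitter; `TransientError` is a hypothetical exception standing in for timeouts or rate-limit responses, and the delay values are arbitrary defaults rather than recommendations.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def call_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying transient failures with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # The base delay doubles each attempt; jitter spreads out concurrent workers.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
            attempt += 1


# Simulated endpoint that fails twice, then succeeds.
attempts = {"count": 0}


def flaky_fetch() -> dict:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("simulated timeout")
    return {"status": "ok"}


recorded_delays: list[float] = []  # capture delays instead of actually sleeping
result = call_with_backoff(flaky_fetch, sleep=recorded_delays.append)
print(result, attempts["count"], len(recorded_delays))
```

Injecting `sleep` keeps the example fast to run and easy to test; in production the default `time.sleep` applies.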

A crucial component of scalable extraction is bypassing frontend scraping through API reverse engineering, which lets businesses interact directly with backend systems instead of relying on unstable user interfaces. This approach keeps data access consistent even when application layouts change, reducing the risk of disruptions.

Moreover, AI web scraping services bring intelligent automation into the extraction process. These systems can adapt to changes in API structures, optimize request handling, and improve overall efficiency over time. By incorporating machine learning capabilities, organizations can reduce manual effort and make their data pipelines more reliable.
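Adapting to a changed API structure starts with noticing the change. The sketch below is a plain rule-based check, not machine learning: it compares the key paths a pipeline expects against the ones a response actually contains. The expected field names are hypothetical, and only nested objects are traversed (lists are treated as leaf values).

```python
def flatten_keys(payload: dict, prefix: str = "") -> set[str]:
    """Return the dotted key paths of a nested JSON object, e.g. {"price.amount"}."""
    paths: set[str] = set()
    for key, value in payload.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict) and value:
            paths |= flatten_keys(value, prefix=path + ".")
        else:
            paths.add(path)  # scalars, lists and empty objects count as leaves
    return paths


def detect_drift(expected: set[str], payload: dict) -> dict[str, list[str]]:
    """Report fields that disappeared from, or newly appeared in, a response."""
    observed = flatten_keys(payload)
    return {
        "missing": sorted(expected - observed),
        "unexpected": sorted(observed - expected),
    }


# Hypothetical contract the pipeline was built against.
expected_fields = {"id", "name", "price.amount", "price.currency"}

# Hypothetical response after the backend renamed "name" to "title".
response = {"id": 7, "title": "Kettle", "price": {"amount": 19.9, "currency": "EUR"}}

drift = detect_drift(expected_fields, response)
print(drift)
```

A report like this can feed an alert or a field-mapping update, which is a simpler first step than a learned model.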

| Criteria | Conventional Methods | Automated API Approach |
|---|---|---|
| Volume Handling | Low | High |
| Adaptability | Limited | Dynamic |
| Downtime Risk | High | Low |
| Cost Efficiency | Moderate | High |

With these automation-driven strategies, businesses can build scalable and future-ready data systems that support continuous growth, improved performance, and smarter decision-making.

How Can Web Data Crawler Help You?

Organizations aiming to modernize their data strategies need a reliable partner that understands complex extraction challenges. We integrate automated data extraction through reverse-engineered APIs into your workflows, providing a seamless and efficient way to collect structured mobile app data at scale.

Key Capabilities:

• Advanced backend data extraction techniques.

• Scalable infrastructure for high-volume data needs.

• Real-time monitoring and updates.

• Secure and compliant data handling.

• Custom integration with analytics platforms.

• Continuous optimization for performance improvement.

These capabilities are backed by our expertise in discovering hidden API endpoints, which helps ensure comprehensive and reliable data coverage for your business needs.

Conclusion

Modern data challenges demand smarter solutions that go beyond the limits of traditional scraping. Businesses that automate data extraction with reverse-engineered APIs can significantly improve data accuracy, reduce operational costs, and build scalable systems for long-term success.

By bypassing frontend scraping through API reverse engineering, organizations can maintain steady access to valuable insights and keep a competitive edge. Get started today with Web Data Crawler and experience smarter, faster, and more reliable data extraction.