How to Automate Authenticated Requests in Mobile App Scraping While Achieving 99% Reliable Data Access?

April 30

Introduction

In today's data-driven ecosystem, extracting structured information from apps requires more than basic crawling. Modern applications rely heavily on authentication layers, encrypted APIs, and dynamic session handling, making Mobile App Scraping increasingly complex. Businesses seeking high-quality datasets must overcome challenges like expiring tokens, multi-factor authentication, and rate-limiting mechanisms that disrupt consistent data extraction.

To ensure continuity, organizations must Automate Authenticated Requests in Mobile App Scraping using advanced scripting, session persistence, and intelligent request replication. According to recent industry reports, over 78% of mobile apps use token-based authentication, while nearly 65% deploy rotating session identifiers, making traditional scraping approaches unreliable.

The need for stable workflows has pushed companies toward automated authentication handling, where scripts mimic real user behavior while maintaining secure access. This blog explores practical strategies to streamline authenticated scraping processes, reduce failures, and maintain consistent data availability across multiple app environments.

Addressing Complex Authentication Layers in Modern App Data Systems

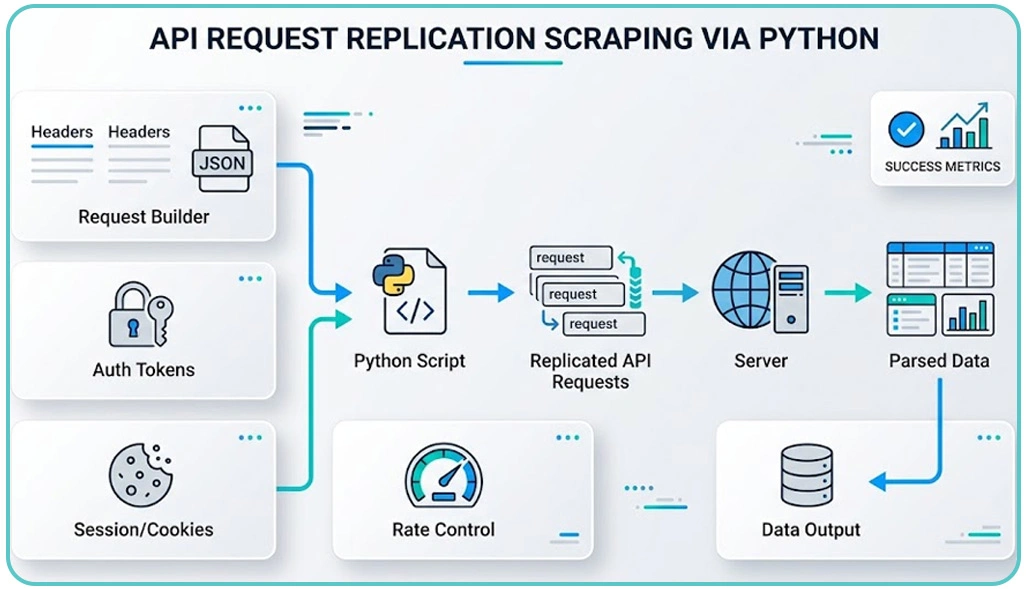

Handling authentication barriers remains one of the most critical aspects of extracting structured data from applications. Modern apps rely heavily on dynamic tokens, encrypted headers, and session-based access, which makes traditional extraction methods ineffective. A practical way to overcome this challenge is by implementing API Request Replication Scraping via Python, where developers capture real-time request patterns and replicate them programmatically. This ensures that backend APIs can be accessed without relying on front-end interactions.

When working with a Scraping API, it becomes essential to understand how headers, payloads, and cookies interact within each request cycle. Even small inconsistencies in request structure can lead to failed responses or blocked access. Therefore, precision in request replication plays a vital role in maintaining continuity.
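As a minimal sketch of what replicating a captured request can look like, the snippet below rebuilds a call from its headers, cookies, and JSON payload using only the Python standard library. The URL, header values, cookie name, and payload are hypothetical placeholders for whatever a traffic capture of a real app would show, and the helper name `build_replayed_request` is introduced here for illustration only.

```python
import json
import urllib.request


def build_replayed_request(url, headers, cookies, payload):
    """Rebuild a captured API call as a urllib Request (nothing is sent here)."""
    # Serialize the JSON body once so the bytes are exactly what gets sent.
    body = json.dumps(payload, separators=(",", ":")).encode("utf-8")
    request = urllib.request.Request(url, data=body, method="POST")
    # Default content type; a captured Content-Type header overrides it below.
    request.add_header("Content-Type", "application/json")
    # Copy the captured headers, since servers may check several together.
    for name, value in headers.items():
        request.add_header(name, value)
    # Cookies travel as one "Cookie" header made of name=value pairs.
    if cookies:
        cookie_header = "; ".join(f"{k}={v}" for k, v in cookies.items())
        request.add_header("Cookie", cookie_header)
    return request


# Hypothetical values standing in for a request observed in a traffic capture.
captured = build_replayed_request(
    url="https://api.example.com/v1/items",
    headers={"User-Agent": "ExampleApp/1.0", "Authorization": "Bearer TOKEN"},
    cookies={"session_id": "abc123"},
    payload={"page": 1},
)
```

Passing `captured` to `urllib.request.urlopen` would send it. Building the request separately from sending it makes it easy to compare the replayed headers and body against the original capture before any traffic goes out.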

Authentication Handling Metrics:

| Parameter | Typical Behavior | Impact on Data Workflow |
|---|---|---|
| Token Expiry Duration | 5–30 minutes | Frequent interruptions |
| Session Dependency | High | Increased complexity |
| API Request Limits | Variable | Throttling challenges |
| Security Layers | Multi-level encryption | Requires advanced handling |

Another critical factor is maintaining session continuity. Without automated token refresh systems, workflows can break unexpectedly, leading to incomplete datasets. By combining request replay techniques and dynamic session handling, organizations can significantly improve extraction reliability.
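One way to picture an automated token refresh is a small cache that renews the token shortly before it expires. The sketch below assumes the caller supplies a `fetch_token` function returning a token and its lifetime in seconds; that function, the class name `TokenManager`, and the 60-second margin are illustrative assumptions, not part of any specific app's API.

```python
import time


class TokenManager:
    """Caches an access token and refreshes it shortly before it expires."""

    def __init__(self, fetch_token, refresh_margin=60.0, clock=time.monotonic):
        # fetch_token is caller-supplied and returns (token, lifetime_seconds);
        # how it actually logs in is app-specific and not shown here.
        self._fetch_token = fetch_token
        self._refresh_margin = refresh_margin
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        """Return a usable token, refreshing first if it is close to expiry."""
        now = self._clock()
        if self._token is None or now >= self._expires_at - self._refresh_margin:
            token, lifetime = self._fetch_token()
            self._token = token
            self._expires_at = now + lifetime
        return self._token
```

Each outgoing request would call `get()` and attach the result, so a token with a 5-minute lifetime is renewed around the 4-minute mark instead of failing mid-run. The injectable `clock` exists only to make the refresh timing testable.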

Overall, implementing structured authentication strategies ensures smoother operations, minimizes downtime, and supports scalable data extraction systems across multiple app environments.

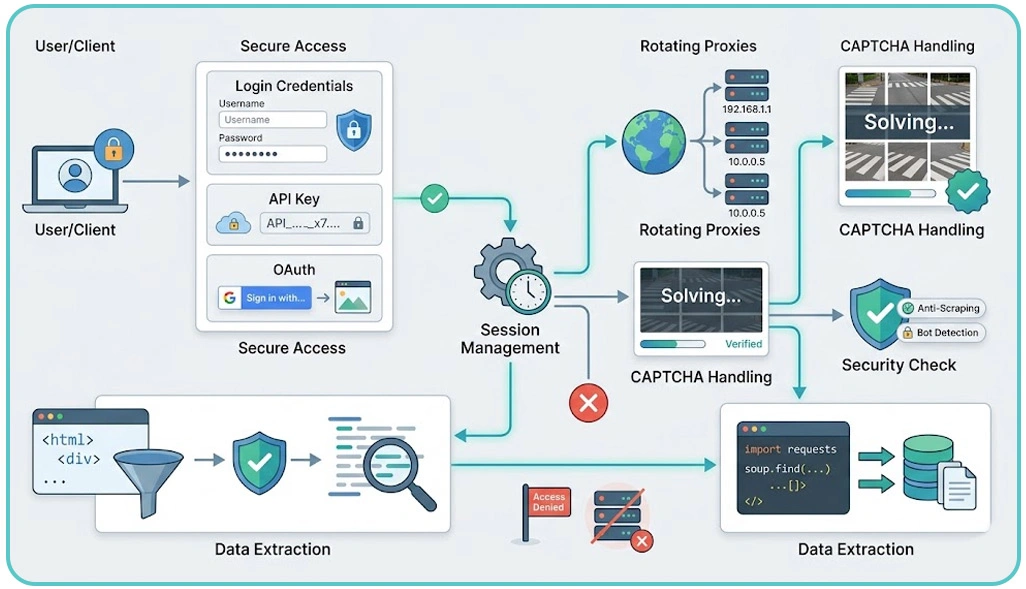

Maintaining Secure Sessions and Consistent Access Across Applications

Ensuring uninterrupted access to app data requires more than just initial authentication; it demands continuous session and credential management. One of the most effective strategies is to Manage Cookies and Tokens for Secure Data Scraping, allowing systems to maintain active sessions without repeated logins. This not only improves efficiency but also reduces the risk of detection.

Advanced automation systems store session data securely and refresh credentials dynamically. This approach helps maintain long-running workflows without disruption. Additionally, integrating Live Crawler Services allows real-time monitoring of authentication endpoints, enabling systems to adapt instantly to any changes in app behavior or security protocols.

Session Management Performance Comparison:

| Feature | Manual Handling | Automated Handling |
|---|---|---|
| Session Renewal | Re-login required | Automatic refresh |
| Workflow Continuity | Interrupted | Continuous |
| Security Implementation | Basic | Advanced |
| Error Recovery | Limited | Intelligent retry logic |

Another widely adopted approach is Scraping Data From Apps With Token-Based Authentication, where scripts dynamically capture and reuse tokens during execution. This minimizes login frequency and ensures stable access throughout the process.
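The capture-and-reuse pattern can be sketched as a thin client that logs in once, reuses the token on every call, and logs in again only when the server answers 401. Both `login` and `send` are hypothetical callables supplied by the caller; the class name and the single-retry policy are assumptions made for this illustration.

```python
class AuthenticatedClient:
    """Reuses one token across requests and re-authenticates only on a 401."""

    def __init__(self, login, send):
        self._login = login  # hypothetical: returns a fresh token string
        self._send = send    # hypothetical: send(path, token) -> (status, body)
        self._token = None

    def request(self, path):
        # Log in lazily, the first time a token is actually needed.
        if self._token is None:
            self._token = self._login()
        status, body = self._send(path, self._token)
        if status == 401:
            # The token was rejected: capture a new one and retry exactly once.
            self._token = self._login()
            status, body = self._send(path, self._token)
        return status, body
```

Retrying only once keeps a genuinely invalid credential from turning into a login loop; if the second attempt also returns 401, the caller sees that status and can decide what to do.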

Security remains a key concern. Proper encryption, secure storage, and controlled access to authentication data are essential to prevent vulnerabilities. By implementing structured session handling frameworks, organizations can achieve reliable, secure, and scalable data extraction processes that adapt to evolving app ecosystems.
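For the controlled-access part, a minimal sketch is to keep session data in a file that only the current user can read. This example relies on POSIX file permissions and does not encrypt anything; encryption at rest would need an additional library or a secrets manager. The function names and the JSON layout are assumptions for illustration.

```python
import json
import os


def save_session(path, session):
    """Write session data to a file created with owner-only permissions."""
    # Mode 0o600 applies when the file is created (POSIX); an existing file
    # keeps whatever permissions it already had.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(session, handle)


def load_session(path):
    """Return the stored session dict, or None if nothing was saved yet."""
    try:
        with open(path, "r", encoding="utf-8") as handle:
            return json.load(handle)
    except FileNotFoundError:
        return None
```

A long-running job could call `load_session` at startup and `save_session` after each token refresh, so a restart resumes the existing session instead of logging in again.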

Developing Scalable Pipelines for Reliable Business Data Insights

Once authentication and session handling are stabilized, the focus shifts toward building scalable pipelines that deliver consistent and accurate data. Organizations depend on app-derived datasets for analytics, forecasting, and Market Research, making reliability a top priority.

To achieve this, teams must follow Best Practices for Handling Authentication in Web Scraping, which include monitoring API changes, implementing distributed systems, and maintaining fallback mechanisms. These strategies help prevent disruptions and ensure continuous data flow even when app structures evolve.
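A fallback mechanism can be as simple as trying a primary source with retries and then moving on to an alternative. The sketch below assumes each fetcher is a zero-argument callable that raises on failure; the function name, the three-attempt default, and the doubling delay are illustrative choices rather than a prescribed policy.

```python
import time


def fetch_with_fallback(fetchers, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Try each fetcher in order, retrying each with exponential backoff."""
    last_error = None
    for fetch in fetchers:
        for attempt in range(attempts):
            try:
                return fetch()
            except Exception as error:  # narrow this to expected errors in practice
                last_error = error
                # Wait 1x, 2x, 4x... the base delay, but not after the last try.
                if attempt < attempts - 1:
                    sleep(base_delay * (2 ** attempt))
    raise RuntimeError("all fetchers failed") from last_error
```

A call such as `fetch_with_fallback([primary_api, cached_copy])`, where both names are placeholders, would only touch the second source after the first has failed three times.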

Another critical capability is Login-Protected Data Scraping, which enables access to restricted or user-specific datasets. This requires secure credential management and precise request execution to maintain compliance with app policies while ensuring data accuracy.

Scalability and Reliability Metrics:

| Metric | Traditional Approach | Optimized Approach |
|---|---|---|
| Data Accuracy | 70–80% | 95–99% |
| System Downtime | Frequent | Minimal |
| Scalability | Limited | High |
| Processing Efficiency | Moderate | Optimized |

Automation also enables parallel processing, allowing data extraction across multiple endpoints simultaneously. This significantly improves speed and reduces latency in data delivery. By integrating monitoring tools and adaptive logic, businesses can quickly respond to API changes and maintain uninterrupted workflows.
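Parallel extraction across endpoints can be sketched with a thread pool from the standard library. Here `fetch` is a hypothetical caller-supplied function that takes one endpoint and returns its data, and the worker count of 4 is an arbitrary default that would need tuning against the target's rate limits.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_all(endpoints, fetch, max_workers=4):
    """Fetch several endpoints concurrently, recording failures per endpoint."""

    def safe_fetch(endpoint):
        # Capture errors so one failing endpoint does not abort the batch.
        try:
            return ("ok", fetch(endpoint))
        except Exception as error:
            return ("error", str(error))

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the inputs.
        outcomes = list(pool.map(safe_fetch, endpoints))
    return dict(zip(endpoints, outcomes))
```

Threads suit this kind of work because the time is spent waiting on the network; keeping a per-endpoint status also gives monitoring a direct view of which endpoints started failing after an API change.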

Ultimately, a well-structured pipeline ensures consistent data quality, supports large-scale operations, and enables organizations to make informed decisions based on reliable insights.

How Can Web Data Crawler Help You?

Handling authentication complexities requires expertise, automation, and scalable infrastructure. Our solutions are designed to Automate Authenticated Requests in Mobile App Scraping while ensuring secure and uninterrupted data workflows across multiple platforms.

We provide end-to-end support that simplifies complex scraping challenges and enhances data reliability:

- Advanced request replication for accurate API interaction.

- Automated session handling with dynamic token refresh.

- Secure data extraction aligned with compliance standards.

- Intelligent retry mechanisms to prevent data loss.

- Scalable architecture for high-volume data processing.

- Real-time monitoring and adaptive scraping logic.

Our approach focuses on delivering consistent, high-quality datasets tailored to your business needs. From small-scale extraction to enterprise-level deployments, we ensure seamless integration with your analytics systems.

In the final stage of implementation, we also manage cookies and tokens for secure data scraping, which keeps access secure and stable across evolving app environments.

Conclusion

Modern app ecosystems demand advanced strategies to maintain consistent data access. Businesses that implement automated workflows can Automate Authenticated Requests in Mobile App Scraping effectively, ensuring high reliability and reduced operational disruptions.

Incorporating techniques like API Request Replication Scraping via Python allows teams to streamline authentication handling while maintaining accuracy and efficiency. Get started today with a scalable Web Data Crawler solution designed to transform your data extraction capabilities and drive measurable business outcomes.